Introduction to turbulence/Stationarity and homogeneity

From CFD-Wiki

(→The temporal Taylor microscale) |

(→The temporal Taylor microscale) |

||

| Line 135: | Line 135: | ||

\rho \left( \tau \right) = 1 + \frac{1}{2} \rho '' \left( 0 \right) \tau^{2} + \cdots | \rho \left( \tau \right) = 1 + \frac{1}{2} \rho '' \left( 0 \right) \tau^{2} + \cdots | ||

</math> | </math> | ||

| - | </td><td width="5%">( | + | </td><td width="5%">(14)</td></tr></table> |

Since <math> \rho \left( \tau \right) </math> has its maximum at the origin, obviously <math> \rho'' \left( 0 \right) </math> must be negative. | Since <math> \rho \left( \tau \right) </math> has its maximum at the origin, obviously <math> \rho'' \left( 0 \right) </math> must be negative. | ||

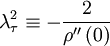

We can use the correlation and its second derivative at the origin to ''define'' a special time scale, <math> \lambda_{\tau} </math> (called the Taylor microscale) by: | We can use the correlation and its second derivative at the origin to ''define'' a special time scale, <math> \lambda_{\tau} </math> (called the Taylor microscale) by: | ||

| + | |||

| + | <table width="70%"><tr><td> | ||

| + | :<math> | ||

| + | \lambda^{2}_{\tau} \equiv - \frac{2}{\rho'' \left( 0 \right)} | ||

| + | </math> | ||

| + | </td><td width="5%">(15)</td></tr></table> | ||

| + | |||

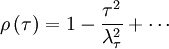

| + | Using this in equation 14 yields the expansion for the correlation coefficient near the origin as: | ||

| + | |||

| + | <table width="70%"><tr><td> | ||

| + | :<math> | ||

| + | \rho \left( \tau \right) = 1 - \frac{\tau^{2}}{\lambda^{2}_{\tau}} + \cdots | ||

| + | </math> | ||

| + | </td><td width="5%">(16)</td></tr></table> | ||

| + | |||

| + | Thus very near the origin the correlation coefficient (and the autocorrelation as well) simply rolls off parabolically; i.e., | ||

Revision as of 07:13, 6 January 2008


Processes statistically stationary in time

Many random processes have the characteristic that their statistical properties do not appear to depend directly on time, even though the random variables themselves are time-dependent. For example, consider the signals shown in Figures 2.2 and 2.5.

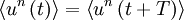

When the statistical properties of a random process are independent of time, the random process is said to be stationary. For such a process all the moments are time-independent, e.g., <math> \left\langle \tilde{u} \left( t \right) \right\rangle = U </math>, etc. In fact, the probability density itself is time-independent, as should be obvious from the fact that the moments are time-independent.

An alternative way of looking at stationarity is to note that the statistics of the process are independent of the origin in time. It is obvious from the above, for example, that if the statistics of a process are time independent, then  , etc., where

, etc., where  is some arbitrary translation of the origin in time. Less obvious, but equally true, is that the product

is some arbitrary translation of the origin in time. Less obvious, but equally true, is that the product  depends only on time difference

depends only on time difference  and not on

and not on  (or

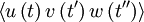

(or  ) directly. This consequence of stationarity can be extended to any product moment. For example

) directly. This consequence of stationarity can be extended to any product moment. For example  can depend only on the time difference

can depend only on the time difference  . And

. And  can depend only on the two time differences

can depend only on the two time differences  and

and  (or

(or  ) and not

) and not  ,

,  or

or  directly.

directly.
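As a rough illustration of the statement that a two-time product moment depends only on the time difference, the sketch below ensemble-averages a sine wave over a uniform set of phases. The random-phase sine model, the function name `ensemble_product` and all parameter values are assumptions chosen for illustration; they do not come from the text above.

```python
import math

def ensemble_product(t, tau, omega=2.0 * math.pi, members=360):
    """Ensemble average <u(t) u(t + tau)> for u = sin(omega*t + phase).

    The ensemble is a uniform grid of phases on [0, 2*pi), a hypothetical
    stand-in for averaging over many independent realizations.
    """
    total = 0.0
    for k in range(members):
        phase = 2.0 * math.pi * k / members
        total += math.sin(omega * t + phase) * math.sin(omega * (t + tau) + phase)
    return total / members

tau = 0.1
for t in (0.0, 0.37, 5.2):
    # Same time difference tau, different origins t: the averages agree.
    print(f"t={t:4.2f}  <u(t)u(t+tau)> = {ensemble_product(t, tau):.6f}")
```

For this assumed model the common value is 0.5 cos(omega tau), whatever origin t is chosen.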

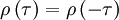

The autocorrelation

One of the most useful statistical moments in the study of stationary random processes (and turbulence, in particular) is the autocorrelation, defined as the average of the product of the random variable evaluated at two times, i.e. <math> \left\langle u \left( t \right) u \left( t' \right) \right\rangle </math>. Since the process is assumed stationary, this product can depend only on the time difference <math> \tau = t' - t </math>. Therefore the autocorrelation can be written as:

| <math> C \left( \tau \right) \equiv \left\langle u \left( t \right) u \left( t + \tau \right) \right\rangle </math> | (1) |

The importance of the autocorrelation lies in the fact that it indicates the "memory" of the process; that is, the time over which the process is correlated with itself. Contrast the autocorrelation of a deterministic sine wave with that of a stationary random process. The autocorrelation of a deterministic sine wave is simply a cosine, as can be easily proven. Note that there is no time beyond which it can be guaranteed to be arbitrarily small, since it always "remembers" when it began and thus always remains correlated with itself. By contrast, a stationary random process like the one illustrated in the figure will eventually lose all correlation and go to zero. In other words, it has a "finite memory" and "forgets" how it was. Note that one must be careful to make sure that a correlation really both goes to zero and stays down before drawing conclusions, since even the autocorrelation of the sine wave is zero at some points. Stationary random processes always have two-time correlation functions which eventually go to zero and stay there.
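The claim that a sine wave never forgets itself can be checked numerically. The following is a minimal sketch, assuming that a time average over a long record may stand in for the ensemble average; the helper name `autocorrelation` and the sampling parameters are illustrative choices, not part of the original text.

```python
import math

def autocorrelation(u, lag):
    """Time-average estimate of C(lag) = <u(t) u(t + lag)> for a sampled record."""
    n = len(u) - lag
    return sum(u[i] * u[i + lag] for i in range(n)) / n

# Hypothetical test signal: unit-amplitude sine, 100 samples per period, 50 periods.
samples_per_period = 100
omega_dt = 2.0 * math.pi / samples_per_period
u = [math.sin(omega_dt * i) for i in range(50 * samples_per_period)]

for lag in (0, 25, 50, 100, 1000):
    estimate = autocorrelation(u, lag)
    cosine = 0.5 * math.cos(omega_dt * lag)   # expected (A**2 / 2) * cos(omega * tau)
    print(f"lag={lag:5d}  estimate={estimate:+.4f}  cosine={cosine:+.4f}")
```

Even ten periods away (lag 1000) the estimate returns to its value at the origin, which is the "infinite memory" described above.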

Example 1.

Consider the motion of an automobile responding to the movement of the wheels over a rough surface. In the usual case where the road roughness is randomly distributed, the motion of the car will be a weighted history of the road's roughness, with the most recent bumps having the most influence and with distant bumps eventually forgotten. On the other hand, if the car is travelling down a railroad track, the periodic crossing of the railroad ties represents a deterministic input and the motion will remain correlated with itself indefinitely, a very bad thing if the tie crossing rate corresponds to a natural resonance of the suspension system of the vehicle.
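The "weighted history" picture of the rough-road case can be sketched with a first-order autoregressive model, in which each new state is a fraction of the previous one plus a fresh random bump. This model, the names `ar1_record` and `correlation_coefficient`, and the value `a = 0.9` are assumptions introduced for illustration only; for such a model the correlation coefficient at a lag of k samples is expected to decay like `a**k`.

```python
import random

def ar1_record(a, n, seed=0):
    """Generate n samples of u[k+1] = a*u[k] + noise (hypothetical road-response model)."""
    rng = random.Random(seed)
    u = [0.0]
    for _ in range(n - 1):
        u.append(a * u[-1] + rng.gauss(0.0, 1.0))
    return u

def correlation_coefficient(u, lag):
    """Time-average estimate of C(lag) / C(0) for a zero-mean record."""
    n = len(u) - lag
    c_lag = sum(u[i] * u[i + lag] for i in range(n)) / n
    c_zero = sum(x * x for x in u) / len(u)
    return c_lag / c_zero

a = 0.9   # weight given to the previous state (assumed value)
u = ar1_record(a, 200_000)
for lag in (0, 5, 20, 200):
    print(f"lag={lag:4d}  rho={correlation_coefficient(u, lag):+.3f}  a**lag={a**lag:.3f}")
```

Unlike the periodic railroad-tie input, the estimated correlation here falls towards zero and stays there, apart from statistical scatter.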

Since a random process can never be more than perfectly correlated, it can never achieve a correlation greater than its value at the origin. Thus

| <math> \left| C \left( \tau \right) \right| \leq C \left( 0 \right) </math> | (2) |

An important consequence of stationarity is that the autocorrelation is symmetric in the time difference <math> \tau = t' - t </math>. To see this, simply shift the origin in time backwards by an amount <math> \tau </math> and note that independence of origin implies:

| <math> \left\langle u \left( t \right) u \left( t + \tau \right) \right\rangle = \left\langle u \left( t - \tau \right) u \left( t \right) \right\rangle </math> | (3) |

Since the right hand side is simply <math> C \left( - \tau \right) </math>, it follows immediately that:

| <math> C \left( \tau \right) = C \left( - \tau \right) </math> | (4) |

The autocorrelation coefficient

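It is convenient to define the autocorrelation coefficient as:

| <math> \rho \left( \tau \right) \equiv \frac{C \left( \tau \right)}{C \left( 0 \right)} = \frac{\left\langle u \left( t \right) u \left( t + \tau \right) \right\rangle}{\left\langle u^{2} \right\rangle} </math> | (5) |

where

| <math> \left\langle u^{2} \right\rangle = \left\langle u \left( t \right) u \left( t \right) \right\rangle = C \left( 0 \right) = var \left[ u \right] </math> | (6) |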

Since the autocorrelation is symmetric, so is its coefficient, i.e.,

| <math> \rho \left( \tau \right) = \rho \left( - \tau \right) </math> | (7) |

It is also obvious from the fact that the autocorrelation is maximal at the origin that the autocorrelation coefficient must also be maximal there. In fact from the definition it follows that

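| <math> \rho \left( 0 \right) = 1 </math> | (8) |

and

| <math> \rho \left( \tau \right) \leq 1 </math> | (9) |

for all values of <math> \tau </math>.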

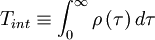

The integral scale

One of the most useful measures of the length of time a process is correlated with itself is the integral scale, defined by

| <math> T_{int} \equiv \int^{\infty}_{0} \rho \left( \tau \right) d \tau </math> | (10) |

It is easy to see why this works by looking at Figure 5.2. In effect we have replaced the area under the correlation coefficient by a rectangle of height unity and width <math> T_{int} </math>.
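The integral in the definition of the integral scale can be evaluated numerically once the correlation coefficient is known on a grid of time lags. The sketch below assumes an exponentially decaying coefficient, exp(-tau/T), whose integral scale is exactly T; the model, the function name `integral_scale` and the numerical values are hypothetical choices for illustration.

```python
import math

def integral_scale(rho, dt):
    """Trapezoidal estimate of the integral of rho(tau) over tau >= 0.

    rho is a list of samples at tau = 0, dt, 2*dt, ... and should extend far
    enough that the correlation has decayed to (and stays at) zero.
    """
    return dt * (sum(rho) - 0.5 * (rho[0] + rho[-1]))

T_true = 0.2   # integral scale of the assumed exponential model
dt = 0.001     # lag spacing
rho = [math.exp(-k * dt / T_true) for k in range(5001)]   # tau from 0 to 25 * T_true

T_est = integral_scale(rho, dt)
print(f"integral scale estimate = {T_est:.5f}")
```

With measured data the upper limit of integration needs care, since statistical scatter in the tail of the correlation can dominate the sum.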

The temporal Taylor microscale

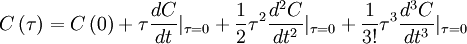

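The autocorrelation can be expanded about the origin in a Maclaurin series; i.e.,

| <math> C \left( \tau \right) = C \left( 0 \right) + \tau \left. \frac{d C}{d \tau} \right|_{\tau = 0} + \frac{1}{2} \tau^{2} \left. \frac{d^{2} C}{d \tau^{2}} \right|_{\tau = 0} + \frac{1}{3!} \tau^{3} \left. \frac{d^{3} C}{d \tau^{3}} \right|_{\tau = 0} + \cdots </math> | (11) |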

But we know the autocorrelation is symmetric in <math> \tau </math>, hence the odd terms in <math> \tau </math> must be identically zero (i.e., <math> \left. dC / d \tau \right|_{\tau = 0} = 0 </math>, <math> \left. d^{3} C / d \tau^{3} \right|_{\tau = 0} = 0 </math>, etc.). Therefore the expansion of the autocorrelation near the origin reduces to:

| <math> C \left( \tau \right) = C \left( 0 \right) + \frac{1}{2} \tau^{2} \left. \frac{d^{2} C}{d \tau^{2}} \right|_{\tau = 0} + \cdots </math> | (12) |

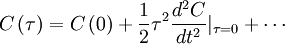

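Similarly, the autocorrelation coefficient near the origin can be expanded as:

| <math> \rho \left( \tau \right) = 1 + \frac{1}{2} \left. \frac{d^{2} \rho}{d \tau^{2}} \right|_{\tau = 0} \tau^{2} + \cdots </math> | (13) |

where we have used the fact that <math> \rho \left( 0 \right) = 1 </math>. If we define <math> ' = d / d \tau </math> we can write this compactly as:

| <math> \rho \left( \tau \right) = 1 + \frac{1}{2} \rho '' \left( 0 \right) \tau^{2} + \cdots </math> | (14) |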

Since <math> \rho \left( \tau \right) </math> has its maximum at the origin, obviously <math> \rho'' \left( 0 \right) </math> must be negative.

We can use the correlation and its second derivative at the origin to define a special time scale, <math> \lambda_{\tau} </math> (called the Taylor microscale) by:

| <math> \lambda^{2}_{\tau} \equiv - \frac{2}{\rho'' \left( 0 \right)} </math> | (15) |

Using this in equation 14 yields the expansion for the correlation coefficient near the origin as:

| <math> \rho \left( \tau \right) = 1 - \frac{\tau^{2}}{\lambda^{2}_{\tau}} + \cdots </math> | (16) |

Thus very near the origin the correlation coefficient (and the autocorrelation as well) simply rolls off parabolically; i.e., <math> \rho \left( \tau \right) \approx 1 - \tau^{2} / \lambda^{2}_{\tau} </math> for small <math> \tau </math>.
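The definition of the Taylor microscale suggests a simple numerical estimate: approximate the second derivative of the correlation coefficient at the origin by a finite difference and invert. The sketch below assumes a Gaussian-shaped coefficient, exp(-tau^2 / lambda^2), for which the definition returns lambda exactly; the model, the function name `taylor_microscale` and the numbers are illustrative assumptions.

```python
import math

def taylor_microscale(rho_at_dt, dt):
    """Estimate lambda_tau from the correlation coefficient at the first lag.

    Uses the symmetric finite difference rho''(0) ~ 2*(rho(dt) - 1)/dt**2,
    which relies on rho(0) = 1 and rho(-dt) = rho(dt).
    """
    second_derivative = 2.0 * (rho_at_dt - 1.0) / dt**2
    return math.sqrt(-2.0 / second_derivative)

lam_true = 0.05   # microscale of the assumed model
dt = 0.001        # lag spacing, small compared with lam_true
rho_dt = math.exp(-(dt / lam_true) ** 2)   # assumed Gaussian-shaped coefficient

lam_est = taylor_microscale(rho_dt, dt)
print(f"lambda_tau estimate = {lam_est:.5f}")
```

The estimate is only as good as the parabolic approximation, so the lag spacing must be well inside the region where the roll-off is still parabolic.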